Imagine going to the doctor in a country where you don’t speak the language well. The doctor uses an AI translation app to communicate with you, but all you notice is the awkward grammar in the translation. Or worse, you hesitate to use the app at all because you don’t want to share personal details. This is the current reality for over 25 million people in the U.S. with limited English proficiency (LEP).

AI health tools are increasingly being deployed to support underserved populations, but most are designed without their users’ needs and contexts in mind. Improving translation alone won’t address most of the barriers that LEP communities face.

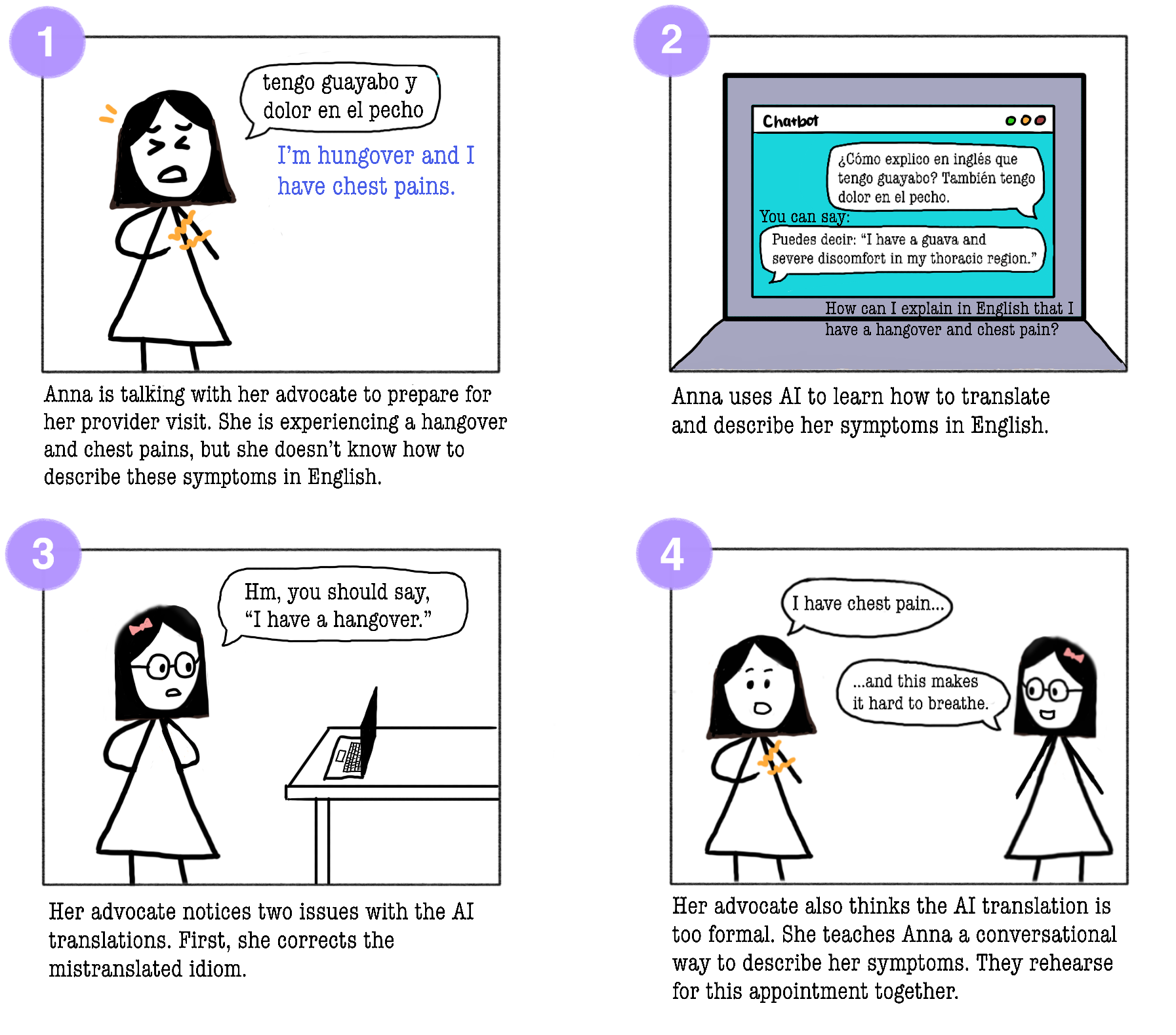

We interviewed 14 patient navigators who support Spanish-speaking patients in Illinois. Patient navigators are professionals who support care-seeking processes and often serve as a bridge between patients and providers, in roles such as patient advocate, caseworker, interpreter, and health liaison. We asked navigators what would happen if their patients used AI for medical information. To ground the discussion, we showed them 6 storyboards that illustrated potential AI health scenarios.

Example storyboard used in interviews with navigators

The barriers LEP patients face in using health technologies go far deeper than translation quality. We identified four key challenges that any AI system would have to address:

| Challenge | What navigators described |
| --- | --- |
| 🗣️ Language | Even within "Spanish", there are dialects and Indigenous languages that interpreters can't bridge. |
| 🌎 Culture | Patients hide home remedies from doctors due to fear of judgment. Many are hesitant to correct or ask for clarification from providers. |
| 🔡 Literacy | Many patients can't read in any language or lack digital literacy skills due to limited experience with usable devices. |
| 🔒 Privacy | Immigrants, or those related to immigrants, are wary of systems that might compromise their data. Many prefer in-person, paper-based, or other methods that leave minimal record. |

So can AI help LEP patients?

AI may not be the solution. Potential disadvantages and risks include:

⚠️ Many patients do not already consistently integrate technology into their information-seeking or communication routines, so adopting AI systems would require additional skills and overhead

⚠️ AI should not replace or inhibit human relationships

⚠️ Patients may not know how to validate information from AI

“If they don’t trust banks or the government, they surely won’t ask AI for help.”

— P5, community navigator

However, navigators were optimistic about AI opportunities, if developed properly:

💡 AI can reduce embarrassment of asking for help, whether it be a personal symptom or repeatedly asking for clarification

💡 AI can support overburdened clinics and medical personnel

💡 AI can be a timely, personalized resource

“If you tell someone about your feelings or doctor’s advice, embarrassment or judgment might hold you back. AI removes that social judgment.”

— P2, patient advocate

> [!IMPORTANT]
> Key Takeaway: Before building AI, ask whether a simpler solution would work just as well: a printed FAQ, a shared audio file, a better-trained navigator, etc. If not, and AI genuinely adds value without being too disruptive, then build it with context in mind: privacy-protecting, offline-capable, culturally aware, and designed to strengthen the human relationships patients already trust.

Read the full paper:

📄 Designing Beyond Language: Sociotechnical Barriers in AI Health Technologies for Limited English Proficiency

Michelle Huang, Violeta J. Rodríguez, Koustuv Saha, Tal August

CHI 2026 · Barcelona, Spain · April 13–17, 2026